
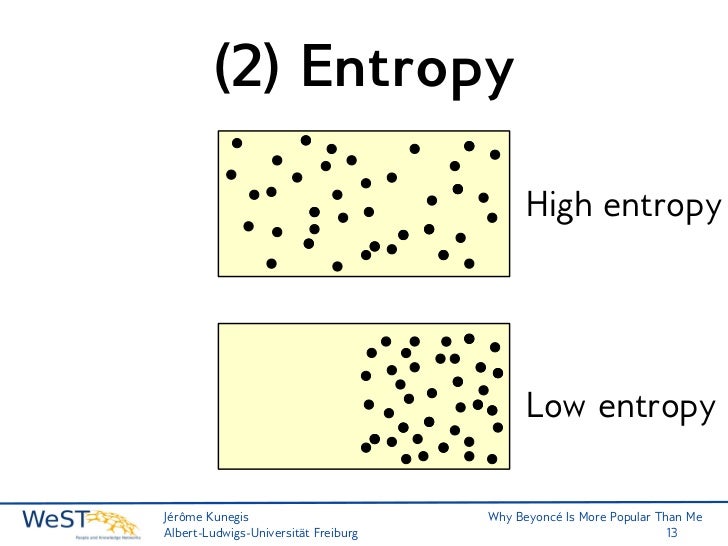

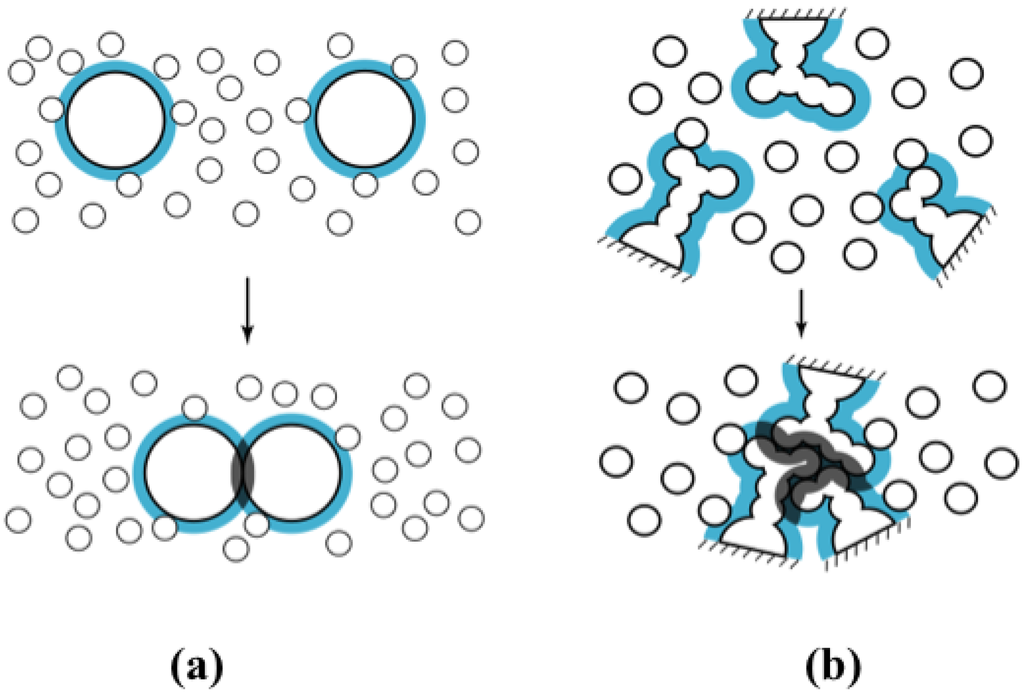

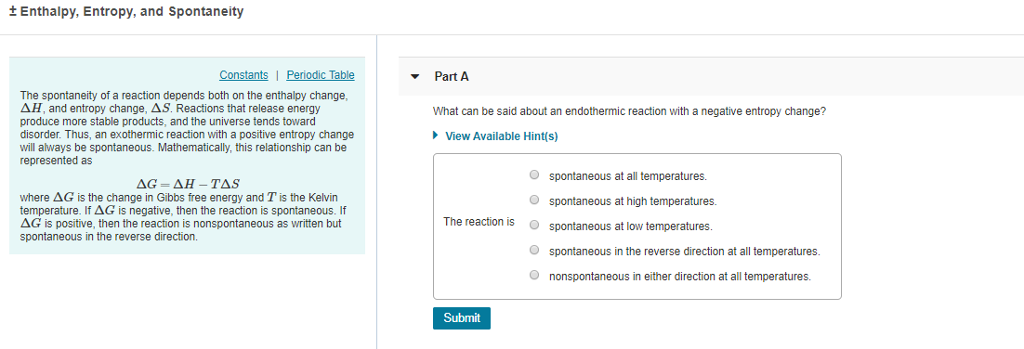

Conceptually, I've always understood entropy to be a statistical idea. For example, if you have a vacuum inside a box and you place a handful of gas atoms on one side, the atoms have a higher statistical probability of spreading out than of remaining in one concentrated spot. Therefore, on average, they spread around and entropy increases. Of course, there's a more elaborate definition involving macrostates and microstates, where entropy is greater when a macrostate has more microstates. However, in my understanding the idea is still the same: increasing entropy is really just a statistical likelihood that holds true on average.

There are many formulas that relate entropy to energy. Before I explain my confusion, I'd like to share an example from my course: hydrogen bonding (a non-covalent interaction). We call the formation of hydrogen bonds "stabilizing"; that is, it lowers the overall Gibbs free energy, $\Delta G$. However, when hydrogen bonds form, the entropy actually decreases ($\Delta S < 0$): there is more order when molecules align to form H-bonds. But this entropy cost is offset by a larger decrease in enthalpy, which brings the overall $\Delta G$ down (so it's negative) and therefore makes the bond "stabilizing".

I'm having a difficult time understanding how we can relate the idea of entropy to energy. A paper that my professor once showed me makes it clear that entropy isn't a measure of energy density or distribution; rather, it's more of a statistical observation. It's not as if some outside force is putting energy into the system to align and order the molecules. So why does being ordered, which is simply a low-probability event actually occurring, affect the energy of a system? Assuming that entropy is a statistical idea (which is where I think my gap in understanding may be), how does forming a hydrogen bond, or being ordered in general, "destabilize" the system and increase its energy? In other words, how is entropy, a statistical idea, playing a role in the energy?

Here is a mathematically precise real-world example. Consider a monatomic ideal gas of $N$ atoms in a box of constant volume $V$, in contact with an environment at temperature $T$. Now imagine increasing the temperature by a small amount $dT$. This will increase the average total energy $U$ of the gas by an amount $dU = \frac{3}{2} N k_B \, dT$, where $k_B$ is Boltzmann's constant.

Entropy was originally intended to operate with probabilistic uncertainty, but today, in decision making, we deal with a wide spectrum of uncertainties: interval, fuzzy, type-2 fuzzy, interval-valued fuzzy, intuitionistic fuzzy, hesitant fuzzy, evidential (Dempster-Shafer theory of evidence), etc.

Suppose you have a box and $n$ balls numbered from $1$ to $n$, and your goal is to put the balls inside the box. However, the more balls there are inside the box, the harder it is to add more. With one box there is not much choice: we have to push through and put all $n$ balls inside. If there are now two such boxes, however, it is easier to put $n/2$ in each box than to put all $n$ in one (or to use any other split, for that matter). The least energy is expended when we share the balls equally among all the boxes. Notice, however, that if someone now closes all the boxes and asks you where ball number $k$ is, you can't say. Had you put all of them in one box, you could tell exactly where it is (by lifting the box, say). In this sense, you have reduced the energy required at the expense of losing information about where the ball is: to minimise energy, you spread your system out. This is a crude analogy for how energy is affected by entropy. The system will always tend towards a lowest-energy configuration, and this happens to be the configuration where the system is spread out.

Entropy is a measure of the 'randomness' of a file. Encrypted files have high entropy; in fact, the entropy of an encrypted file is maximised. Graphing entropy against the file contents makes the contrast between a typical text file and an encrypted file easy to see. In the encrypted file's graph, ignore the tiny spike to low entropy; it was caused by some header information or equivalent.

Shannon's entropy equation, $H = -\sum_i p_i \log_2 p_i$ (where $p_i$ is the probability of symbol $i$), is the standard method of calculation. Here is a simple implementation in Python, shamelessly copied from the Revelation codebase, and thus GPL licensed:

```python
import math

def entropy(string):
    "Calculates the Shannon entropy of a string"
    # probability of each distinct character in the string
    prob = [float(string.count(c)) / len(string) for c in dict.fromkeys(list(string))]
    # H = -sum(p * log2(p)), in bits per character
    return - sum([p * math.log(p) / math.log(2.0) for p in prob])

def entropy_ideal(length):
    "Calculates the ideal Shannon entropy of a string with given length"
    prob = 1.0 / length
    return -1.0 * length * prob * math.log(prob) / math.log(2.0)
```

Note that this implementation assumes that your input bitstream is best represented as bytes. This may or may not be the case for your problem domain. What you really want is your bitstream converted into a string of numbers, and just how you decide what those numbers are is domain specific. If your numbers really are just ones and zeros, then convert your bitstream into an array of ones and zeros. Be aware, however, that the conversion method you choose will affect the results you get.
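The conversion point above can be made concrete with a minimal sketch. It assumes Python 3 and uses `collections.Counter` in place of the `str.count` loop in the Revelation code. The names `shannon_entropy` and `to_bits` are hypothetical helpers introduced for illustration, and `to_bits` assumes most-significant-bit-first order. The sketch measures the same 256 bytes twice: once as bytes, and once after converting them into an array of ones and zeros.

```python
import math
from collections import Counter


def shannon_entropy(symbols):
    """Hypothetical helper: Shannon entropy, in bits per symbol, of any iterable of hashable symbols."""
    counts = Counter(symbols)
    total = sum(counts.values())
    if total == 0:
        return 0.0  # treat empty input as carrying no information
    # H = sum of p * log2(1/p); written this way every term is >= 0
    return sum((n / total) * math.log2(total / n) for n in counts.values())


def to_bits(data):
    """Hypothetical helper: expand bytes into a list of 0/1 ints, most significant bit first (assumed order)."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]


data = bytes(range(256))  # every byte value exactly once
print(shannon_entropy(data))           # 8.0 (bits per byte)
print(shannon_entropy(to_bits(data)))  # 1.0 (bits per bit)
```

Both numbers say "maximally random", yet they differ because they measure different units: bits per byte versus bits per bit. That is exactly why the representation you pick for your bitstream changes the result.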
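Graphs of entropy against file contents are usually produced by measuring entropy over successive pieces of the file. The sketch below is an assumption about how such a graph could be computed, not the method behind the graphs described in this post. It uses fixed, non-overlapping 256-byte blocks, and `block_entropy` and its `block_size` parameter are hypothetical names. The returned list can be plotted against the block offset.

```python
import math
from collections import Counter


def block_entropy(data, block_size=256):
    """Hypothetical helper: Shannon entropy (bits per byte) of each consecutive block of data.

    The final block may be shorter than block_size; it is measured on its own length.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    profile = []
    for start in range(0, len(data), block_size):
        block = data[start:start + block_size]
        n = len(block)
        counts = Counter(block)
        # H = sum of p * log2(1/p) over the byte values seen in this block
        profile.append(sum((c / n) * math.log2(n / c) for c in counts.values()))
    return profile


sample = b"the quick brown fox jumps over the lazy dog " * 12  # plain ASCII text
print(block_entropy(sample))             # every value is at most log2(27), about 4.75
print(block_entropy(bytes(range(256))))  # [8.0]
```

Small blocks bias the estimate downward. Even truly random data measured 256 bytes at a time will usually read noticeably below 8 bits per byte, because some byte values repeat and others never appear. Larger blocks smooth the curve, at the cost of hiding short features such as a header spike.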
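The `entropy_ideal` function from the Revelation snippet is easier to read once simplified. Writing $L$ for its `length` argument (a symbol introduced here), its return value reduces to:

$$
-L \cdot \frac{1}{L} \cdot \frac{\ln(1/L)}{\ln 2} \;=\; -\log_2 \frac{1}{L} \;=\; \log_2 L
$$

This is the entropy of a string whose $L$ characters are all distinct, which is the largest value `entropy()` can return for a string of that length. That is presumably the sense of "ideal" in its docstring.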
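For the hydrogen-bond example in the question, the standard relation at constant temperature and pressure makes the trade-off explicit (usual textbook sign conventions assumed):

$$
\Delta G = \Delta H - T\,\Delta S
$$

$$
\Delta S < 0 \;\Rightarrow\; -T\,\Delta S > 0, \qquad \text{so for } \Delta H < 0:\quad \Delta G < 0 \iff |\Delta H| > T\,|\Delta S|
$$

One standard way to see how a statistical quantity ends up in an energy is to follow the heat. At constant $T$ and $p$, the heat released by bond formation, $-\Delta H$, raises the entropy of the surroundings by $\Delta S_\text{surr} = -\Delta H / T$. Substituting gives $\Delta G = -T\,(\Delta S + \Delta S_\text{surr})$, so a negative $\Delta G$ is the same statement as a positive total entropy change of system plus surroundings.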
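The ideal-gas example earlier in this post breaks off after $dU$. What follows is a standard continuation, offered as a sketch and not necessarily where the original answer went. It uses $k_B$ for Boltzmann's constant. At constant volume no work is done, so the heat absorbed equals $dU$, and therefore:

$$
dS = \frac{\delta Q_\text{rev}}{T} = \frac{dU}{T} = \frac{3}{2} N k_B \,\frac{dT}{T},
\qquad
\frac{1}{T} = \left(\frac{\partial S}{\partial U}\right)_{V,N}
$$

The second relation is the general statistical-mechanics definition of temperature. It ties how the entropy (a count of microstates) changes to how the energy changes.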
